AI Takes a Village — and a Tech Stack (and Someone to Run It)

Steven Palange


Written by Steven Palange, CAO, CIO, CSO, & CISSP | Thought Leader | Helping CXOs & IT Leaders Solve Automation, AI, Cybersecurity, and Cloud with Proven, Scalable Solutions. E: steven_palange@tlic.com P: 401-214-5557


Most organizations are not behind on AI anymore.

They’re overloaded.

Copilot is deployed. ChatGPT is approved. SaaS platforms quietly added AI features. Power users are experimenting beyond policy.

On paper, adoption looks healthy.

In reality, the pattern looks different:

- Productivity gains are inconsistent

- Risk is rising quietly

- Costs are creeping upward

- Accountability is unclear

And leadership keeps asking the same question:

“What AI are we actually running — and who owns it?”

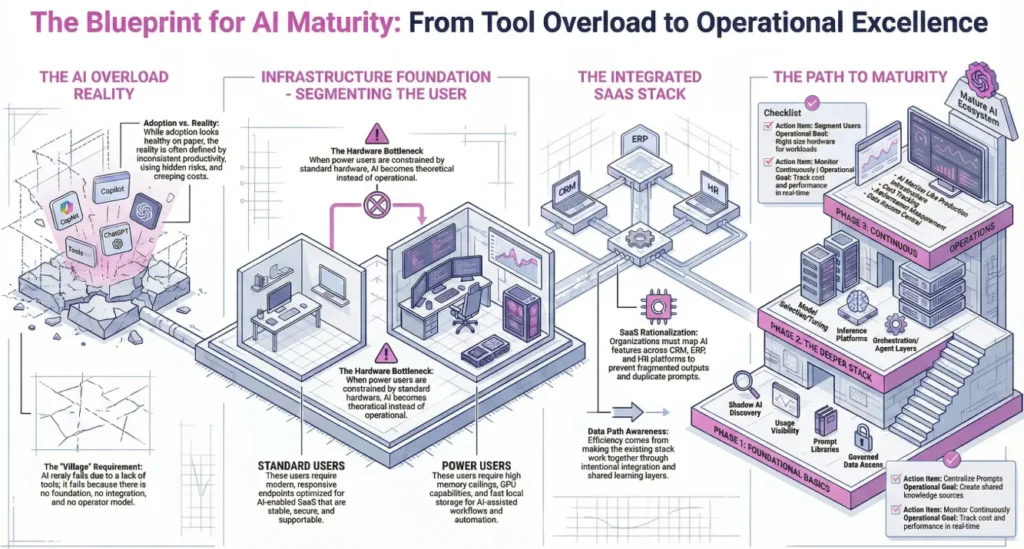

AI rarely fails because of missing tools. It fails because there is no foundation, no integration, and no operator model.

AI takes a village — and a stack — and people who can run both.

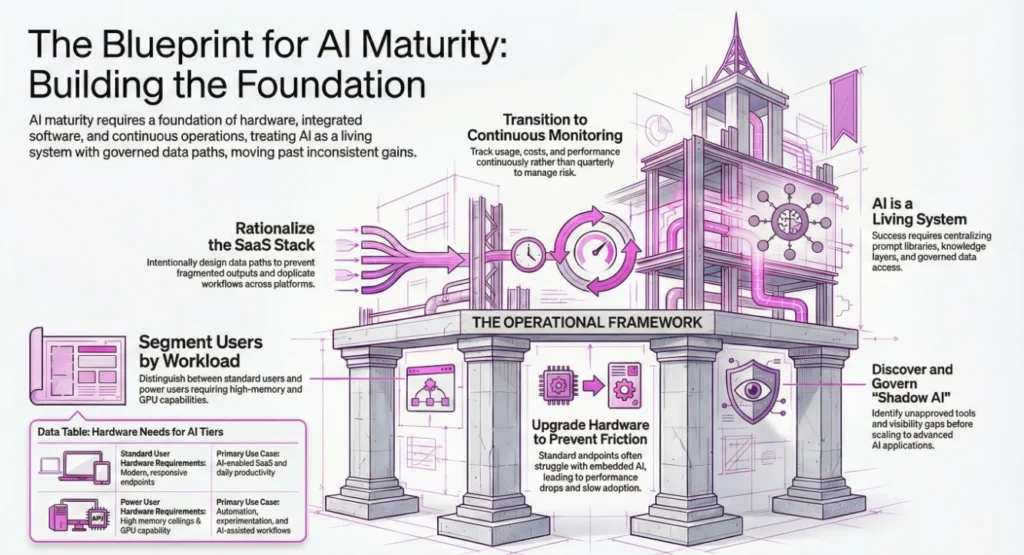

The Layer Most Companies Underestimate: Hardware

AI exposes weak infrastructure immediately.

Standard endpoints struggle once AI features are embedded across daily applications. Performance drops. Friction rises. Adoption slows.

But the bigger mistake is treating all users the same.

In practice, organizations have at least two AI user classes:

Standard users

- Need modern, responsive endpoints

- Optimized for AI-enabled SaaS

- Stable, secure, supportable

Power users

- Need high memory ceilings

- GPU capability

- Fast local storage

- Systems built for automation, experimentation, and AI-assisted workflows

When power users are constrained by hardware, AI becomes theoretical instead of operational.
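One way to make the two user classes concrete is to check each power user's assigned endpoint against a minimum hardware profile. The sketch below is illustrative only: the `Endpoint` fields, the 32 GB memory floor, and the function names are assumptions made for this example, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    hostname: str
    ram_gb: int
    has_gpu: bool
    nvme_storage: bool  # stand-in for "fast local storage"


# Hypothetical threshold; tune it to your own workloads.
POWER_MIN_RAM_GB = 32


def meets_power_profile(ep: Endpoint) -> bool:
    """True if the endpoint has the memory, GPU, and storage a power user needs."""
    return ep.ram_gb >= POWER_MIN_RAM_GB and ep.has_gpu and ep.nvme_storage


def find_constrained_power_users(
    assignments: dict[str, Endpoint], power_users: set[str]
) -> list[str]:
    """Return power users whose assigned endpoint falls short of the profile."""
    return sorted(
        user
        for user in power_users
        if user in assignments and not meets_power_profile(assignments[user])
    )
```

Run against an asset inventory export, a check like this turns "our power users feel slow" into a named list of people and machines.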

The Next Reality: Your SaaS Stack Is Becoming Your AI Stack

Most companies haven’t fully internalized this yet. CRM, ERP, HR, finance, support, and marketing platforms now include embedded AI and expanding data exchange paths.

Without intentional design, this creates:

- Fragmented AI outputs

- Duplicate prompts and workflows

- No shared learning

- No governance trail

AI efficiency doesn’t come from adding more tools.

It comes from making the existing stack work together — intentionally.

That requires:

- SaaS rationalization

- AI feature mapping

- Integration design

- Data path awareness
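AI feature mapping can start as something very simple: a table of which platform offers which AI capability, checked for overlap. The sketch below assumes a hand-maintained map; the platform and capability names in the usage note are placeholders, not a statement about any real product.

```python
from collections import defaultdict


def overlapping_capabilities(feature_map: dict[str, set[str]]) -> dict[str, list[str]]:
    """Given platform -> AI capabilities, return each capability offered by
    more than one platform, with the platforms that offer it."""
    platforms_by_capability: dict[str, list[str]] = defaultdict(list)
    for platform, capabilities in feature_map.items():
        for capability in capabilities:
            platforms_by_capability[capability].append(platform)
    return {
        capability: sorted(platforms)
        for capability, platforms in platforms_by_capability.items()
        if len(platforms) > 1
    }
```

For example, if a CRM and a support platform both list "email drafting", the function returns that capability with both platform names, which is a natural starting point for a rationalization conversation.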

Before “Advanced AI,” the Basics Must Exist

Every successful AI program starts with uncomfortable clarity:

- Who is using AI today?

- Which tools — approved or not?

- For what business outcomes?

- With which data sources?

That leads to foundational controls:

- Shadow AI discovery

- AI usage visibility

- Prompt libraries

- Governed data access

- Vector knowledge layers
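The four questions above map naturally onto a usage inventory, and Shadow AI discovery is then a comparison against the approved list. This is a minimal sketch under stated assumptions: the record fields, the `APPROVED_TOOLS` set, and the function name are hypothetical, and real discovery would draw on network, SSO, or expense data rather than a hand-built list.

```python
from dataclasses import dataclass, field


@dataclass
class AIUsageRecord:
    user: str               # who is using AI
    tool: str               # which tool
    business_outcome: str   # for what outcome
    data_sources: list[str] = field(default_factory=list)  # with which data


# Illustrative approved list; replace with your own policy.
APPROVED_TOOLS = {"Copilot", "ChatGPT"}


def find_shadow_ai(
    records: list[AIUsageRecord], approved: set[str] = APPROVED_TOOLS
) -> dict[str, list[str]]:
    """Return unapproved tool -> sorted list of users observed using it."""
    users_by_tool: dict[str, set[str]] = {}
    for record in records:
        if record.tool not in approved:
            users_by_tool.setdefault(record.tool, set()).add(record.user)
    return {tool: sorted(users) for tool, users in users_by_tool.items()}
```

The point of the structure is less the code than the discipline: every record must answer all four questions, including the data sources.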

Only after this foundation exists does the deeper AI stack make sense:

- Data layer

- Model selection and tuning

- Inference platforms

- Orchestration and agent layers

- Workflow-embedded AI applications

Skipping these steps doesn’t accelerate AI.

It amplifies risk.

The Part Most Vendors Avoid: Ongoing AI Operations

Here’s the operational truth:

AI that isn’t monitored becomes:

- Inconsistent

- Expensive

- Risky

- Eventually ignored

AI is not a feature rollout.

It’s a living system.

It requires:

- Usage monitoring

- Prompt and workflow management

- Data access control

- Cost tracking

- Performance measurement

- Continuous adjustment

Not quarterly. Continuously.
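Cost tracking is the easiest of these to automate first. The sketch below assumes usage events with a per-event cost are already being collected; the `UsageEvent` fields, the budget dictionary, and the function names are assumptions for illustration, not any vendor's billing API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    tool: str
    user: str
    cost_usd: float


def cost_by_tool(events: list[UsageEvent]) -> dict[str, float]:
    """Sum spend per AI tool across all events."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        totals[event.tool] += event.cost_usd
    return dict(totals)


def over_budget(events: list[UsageEvent], budgets: dict[str, float]) -> list[str]:
    """Return tools whose spend exceeds budget.
    A tool with no budget entry is treated as having a budget of zero,
    so any spend on it is flagged."""
    totals = cost_by_tool(events)
    return sorted(
        tool for tool, spent in totals.items() if spent > budgets.get(tool, 0.0)
    )
```

Scheduled daily rather than quarterly, a check like this surfaces both overruns and unbudgeted tools while they are still small.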

A Real-World Pattern That Works

Organizations seeing real AI gains tend to follow a similar path:

- They segment users by AI workload.

- They upgrade endpoints accordingly.

- They rationalize SaaS tools before adding more.

- They discover Shadow AI instead of pretending it doesn’t exist.

- They centralize prompts and knowledge sources.

- They govern model and data usage.

- They monitor AI like production infrastructure.

The result is not “AI magic.”

It’s AI that is measurable, governable, and useful.

Three Questions Worth Asking

Do your power users have hardware that enables AI — or quietly blocks it?

Can you see, in one place, which AI tools are running across your organization today?

If AI usage doubled tomorrow, would your stack scale — or spiral?

Closing Thought

The organizations getting real value from AI didn’t just buy smarter tools.

They built the right foundation. They integrated the right stack. They put operators behind it.

That’s what AI maturity actually looks like.
